A step-by-step journey through the mathematics, noise, and neural magic behind modern AI image synthesis

What looks like magic — typing a few words and watching a photorealistic image materialize — is actually a precise, deeply mathematical pipeline. Every AI-generated image passes through a chain of specialized components, each with a distinct job. Understanding these components doesn’t just satisfy curiosity; it gives you real creative control over your outputs. Let’s pull back the curtain, piece by piece.

01 — FOUNDATION

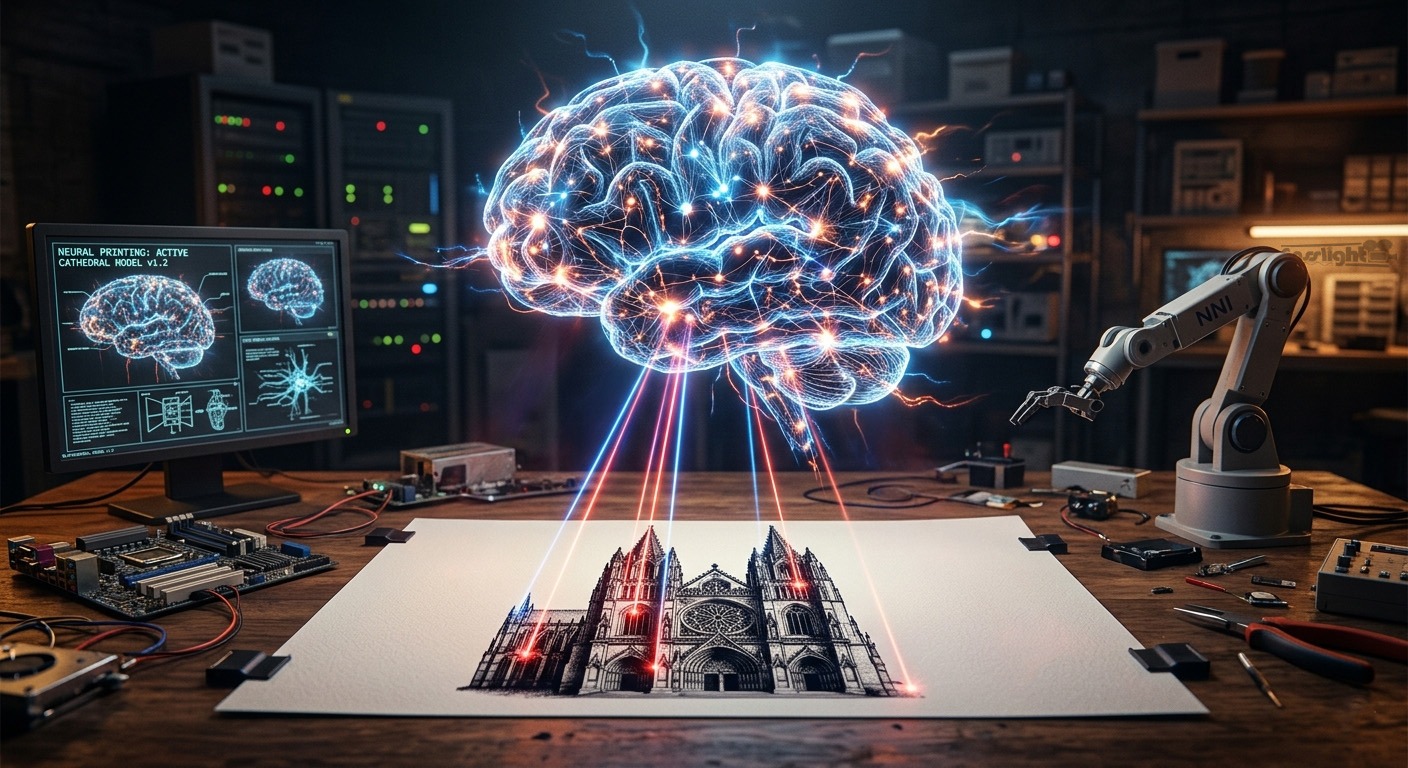

The Checkpoint: A Neural Network’s Frozen Memory

Everything begins with the model checkpoint — a large file, typically 2–7 gigabytes, containing the fully trained weights of a diffusion neural network. Think of it as the “frozen knowledge” of a system that has processed hundreds of millions of image-text pairs. The model has, in essence, learned the statistical relationship between visual content and language.

A checkpoint is not a database of images. It contains no stored pictures. Instead, it holds hundreds of millions to billions of numerical parameters that encode an abstract understanding of what concepts look like (“dog,” “cinematic lighting,” “impressionist brushwork”) and how those concepts relate to one another visually. From a single checkpoint, you can generate an effectively infinite variety of images.
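The file size quoted earlier follows from simple arithmetic: parameter count times bytes per parameter. The sketch below is only a rough estimate under stated assumptions. The parameter counts are illustrative round numbers rather than measurements of any particular model, and `checkpoint_size_gb` is a hypothetical helper, not part of any library.

```python
# Rough checkpoint size estimate: parameters x bytes per parameter.
# The parameter counts used below are illustrative assumptions.

BYTES_PER_PARAM = {"fp32": 4, "fp16": 2}


def checkpoint_size_gb(num_params: int, precision: str = "fp16") -> float:
    """Approximate size of the raw weights in gigabytes (10^9 bytes)."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9


if __name__ == "__main__":
    for params in (1_000_000_000, 3_500_000_000):
        for precision in ("fp16", "fp32"):
            size = checkpoint_size_gb(params, precision)
            print(f"{params:>13,} params @ {precision}: ~{size:.1f} GB")
```

Under these assumptions, a model with one to a few billion parameters stored in half precision lands in the 2–7 GB range. Real files also carry metadata, and they may bundle the text encoder and VAE, so actual sizes vary.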

Popular checkpoints range from general-purpose models to highly specialized fine-tunes: realistic photography models, anime-focused variants, architectural visualization models, and more. The checkpoint sets the creative ceiling of your generation: styles and subjects it never learned are difficult to coax out through prompting alone.

02 — LANGUAGE

CLIP & Text Encoding: Turning Words into Mathematics

Before a single pixel can be touched, the model must understand what you want. This is the job of CLIP — Contrastive Language–Image Pretraining, a system trained by OpenAI that learned to associate language with visual concepts by studying hundreds of millions of image-caption pairs.

When you write a prompt, CLIP doesn’t read it the way a human does. Instead, it splits your text into tokens and converts them into a sequence of high-dimensional numerical vectors, often called a conditioning tensor. This tensor doesn’t store words; it stores meaning in a mathematical form the neural network can directly act upon.
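To make the shape of that conditioning concrete, here is a deliberately simplified toy. It is not how CLIP works internally: the whitespace tokenizer, the special token strings, the 8-dimensional embedding size, and every function name are hypothetical stand-ins. The only detail borrowed from CLIP is the fixed 77-token context window.

```python
import random

CONTEXT_LENGTH = 77  # fixed token window used by CLIP text encoders
EMBED_DIM = 8        # toy size; real encoders use hundreds of dimensions


def toy_tokenize(prompt: str) -> list[str]:
    """Hypothetical whitespace tokenizer (real CLIP uses byte-pair encoding)."""
    words = prompt.lower().split()[: CONTEXT_LENGTH - 2]
    tokens = ["<start>"] + words + ["<end>"]
    # Pad so every prompt yields the same number of tokens.
    return tokens + ["<pad>"] * (CONTEXT_LENGTH - len(tokens))


def toy_embed(token: str) -> list[float]:
    """Deterministic stand-in for a learned embedding lookup."""
    rng = random.Random(token)  # same token always yields the same vector
    return [rng.gauss(0.0, 1.0) for _ in range(EMBED_DIM)]


def encode_prompt(prompt: str) -> list[list[float]]:
    """Return a CONTEXT_LENGTH x EMBED_DIM 'conditioning tensor'."""
    return [toy_embed(token) for token in toy_tokenize(prompt)]


if __name__ == "__main__":
    conditioning = encode_prompt("a red fox in the snow")
    print(len(conditioning), len(conditioning[0]))  # prints: 77 8
```

A real text encoder also passes the token vectors through a transformer, so each output vector depends on the whole prompt rather than on a single word. The toy skips that step and shows only the fixed-size numeric output.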

The model doesn’t read your prompt. It translates your words into a precise coordinate in a vast mathematical space — and then navigates toward it, step by step.

Most image generation workflows use two text prompts simultaneously: a positive prompt describing what you want, and a negative prompt describing what you don’t want. At every denoising step the model makes a noise prediction under each conditioning, and the sampler pushes the result toward the positive prediction and away from the negative one. This is Classifier-Free Guidance (CFG), with the negative prompt taking the place of the empty, unconditional prompt; the guidance scale sets how strong the push is.
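The guidance step itself is a one-line extrapolation. The sketch below uses the commonly cited form, where the result starts at the negative (or unconditional) prediction and moves toward the positive one by a factor of `guidance_scale`. The function name and the flat lists of floats are simplifications for illustration; real samplers apply the same arithmetic to full latent tensors.

```python
def apply_cfg(noise_positive, noise_negative, guidance_scale):
    """Combine two noise predictions with classifier-free guidance.

    guided = negative + scale * (positive - negative)
    A scale of 1.0 returns the positive prediction unchanged; larger
    values push further away from the negative prediction.
    """
    return [
        neg + guidance_scale * (pos - neg)
        for pos, neg in zip(noise_positive, noise_negative)
    ]


if __name__ == "__main__":
    positive = [1.0, 2.0]  # toy prediction under the positive prompt
    negative = [0.0, 1.0]  # toy prediction under the negative prompt
    print(apply_cfg(positive, negative, 1.0))  # [1.0, 2.0]
    print(apply_cfg(positive, negative, 7.5))  # [7.5, 8.5]
```

Because the model has to run twice per step, once for each conditioning, guidance roughly doubles the cost of every denoising step.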

03 — COMPRESSION

The VAE: Working in a Compressed Reality

Here’s a practical problem: operating directly on full-resolution pixel images would require enormous computational resources for every denoising step. A single 1024×1024 image contains over a million pixels, each with three color channels. Running a neural network over all of that dozens of times per image, once per denoising step, would be prohibitively slow.

The Variational Autoencoder (VAE) solves this elegantly. It compresses images into a much smaller latent space — typically 8× smaller in each dimension. A 1024×1024 pixel image becomes a 128×128 latent representation. The VAE encoder learns to preserve the essential structural and color information while stripping out redundancy.
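The savings are easy to quantify. The sketch below assumes the 8× per-dimension factor described above plus a 4-channel latent, which is common for Stable Diffusion-style VAEs but is an assumption here, since other models use different channel counts. Both helper functions are hypothetical.

```python
DOWNSCALE = 8        # per-dimension compression factor from the text
LATENT_CHANNELS = 4  # assumption: typical for Stable Diffusion-style VAEs
RGB_CHANNELS = 3


def latent_shape(width: int, height: int) -> tuple[int, int, int]:
    """Return (channels, height, width) of the latent for a pixel size."""
    if width % DOWNSCALE or height % DOWNSCALE:
        raise ValueError(f"dimensions must be multiples of {DOWNSCALE}")
    return (LATENT_CHANNELS, height // DOWNSCALE, width // DOWNSCALE)


def compression_ratio(width: int, height: int) -> float:
    """How many pixel values map onto each latent value."""
    channels, lat_h, lat_w = latent_shape(width, height)
    return (width * height * RGB_CHANNELS) / (channels * lat_h * lat_w)


if __name__ == "__main__":
    print(latent_shape(1024, 1024))       # (4, 128, 128)
    print(compression_ratio(1024, 1024))  # 48.0
```

Under these assumptions the denoiser handles 65,536 values per step instead of 3,145,728, a 48-fold reduction.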

All of the actual diffusion work happens in this compressed latent space. It’s only at the very end of the pipeline that the VAE decoder expands the latent back into a full-resolution pixel image. The quality of the VAE significantly affects final output — particularly color accuracy, fine detail rendering, and the crispness of edges. Swapping in a higher-quality VAE can visibly improve skin tones, text rendering, and micro-detail.

04 — THE CANVAS

The Latent Image: Starting from Pure Chaos

With the VAE established, we need a starting point. That starting point is pure random noise.

When you specify your desired image dimensions and batch size, the system creates a tensor of completely random values in latent space — meaningless static, mathematically speaking. This is your blank canvas. Not blank in the sense of white or empty, but blank in the sense of all possibilities simultaneously.

The seed number you choose determines exactly what the initial noise looks like. The same seed, settings, and model will reproduce the same image, provided the sampler, software version, and hardware also match; small numerical differences between GPUs or library versions can change the result. Changing the seed is like shuffling the deck before the deal.
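Seeded noise is easy to demonstrate with the standard library alone. In the sketch below, `make_latent_noise` is a hypothetical helper and Python's `random.Random` stands in for the tensor library's random generator that real tools use. The latent dimensions are kept tiny for readability.

```python
import random


def make_latent_noise(seed: int, channels: int = 4,
                      height: int = 8, width: int = 8):
    """Return a channels x height x width nested list of Gaussian noise."""
    rng = random.Random(seed)  # private generator, fully determined by seed
    return [
        [[rng.gauss(0.0, 1.0) for _ in range(width)] for _ in range(height)]
        for _ in range(channels)
    ]


if __name__ == "__main__":
    first = make_latent_noise(seed=42)
    second = make_latent_noise(seed=42)
    other = make_latent_noise(seed=43)
    print(first == second)  # True: same seed, identical starting noise
    print(first == other)   # False: new seed, new starting noise
```

Identical starting noise is necessary for a reproducible image, but it is not sufficient: every later step has to be deterministic as well.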

The entire goal of what follows is to transform this noise into something coherent and beautiful, guided by your text conditioning. Think of it like a sculptor discovering a figure hidden inside a block of marble — except the sculptor is a neural network, and it works in reverse, removing confusion rather than stone.